October 26, 2023

How can AI and machine learning secure sensitive data in healthcare apps? Learn how integrating AI/ML in healthcare improves threat detection, authentication, IoT security, and more while managing risks around patient privacy.

Artificial intelligence (AI) and machine learning (ML) technologies are rapidly transforming many industries, including healthcare app development. As adoption grows, these tools also offer new ways to help secure sensitive patient health data.

The healthcare industry accumulates massive amounts of sensitive patient data from various sources like electronic health records, insurance claims, prescriptions, lab tests, wearable devices, and more.

For example, a single patient's health data may include:

- Hundreds of doctor's visits and procedure notes

- Thousands of diagnostic lab results

- Genetic test results

- Prescription records

- Insurance claims and billing details

- Data from wearables like step counts, heart rate, sleep patterns

This exponentially growing data needs stringent protections for several reasons:

- It contains highly sensitive personal health information covered by regulations like HIPAA and GDPR. Privacy breaches can lead to identity theft, insurance fraud, and other harms that damage patient trust.

- Cyberattacks and data breaches across the healthcare sector are rampant, growing in frequency and severity each year. There was a 125% increase in healthcare data breaches in the U.S.

- Breaches cost healthcare organizations immensely in legal liabilities, regulatory fines, and reputational damage.

With data volumes and risks increasing every year, traditional security methods like firewalls and access controls struggle to keep pace. AI/ML-based tools introduce smarter options to bolster data security in healthcare apps.

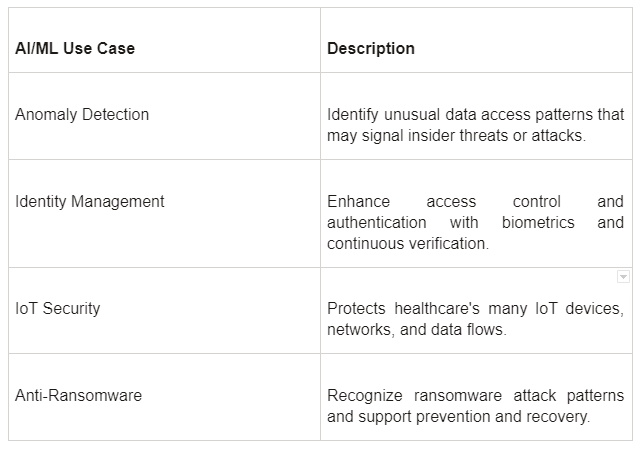

Key Use Cases for AI/ML in Healthcare Data Protection

AI and ML technologies have diverse applications for safeguarding healthcare data:

Anomaly detection is a vital application. Here, machine learning models analyze usage patterns across systems to identify abnormal access events that may indicate insider threats, cyberattacks, or other breach risks.

For example, a model can treat an unusual spike in late-night database queries or an unusually large batch data transfer as a red flag.

By proactively surfacing these anomalies, potential breaches can be flagged early before damage is done.
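As a rough illustration of the late-night query example, the sketch below flags hours whose query count sits far above a historical baseline using a simple z-score. It is a statistical stand-in for a trained model, and the function name, threshold, and sample counts are all assumptions made for this example.

```python
from statistics import mean, stdev

def flag_anomalous_hours(baseline_counts, observed_counts, threshold=3.0):
    """Return indices of observed hours whose query count is far above the baseline."""
    mu = mean(baseline_counts)
    sigma = stdev(baseline_counts)
    if sigma == 0:
        # Flat baseline: flag any count above the baseline mean.
        return [i for i, c in enumerate(observed_counts) if c > mu]
    # Flag hours more than `threshold` standard deviations above the mean.
    return [i for i, c in enumerate(observed_counts) if (c - mu) / sigma > threshold]

# Hypothetical late-night query counts per hour from previous weeks
baseline = [4, 6, 5, 7, 5, 6, 4, 5]
# Tonight's counts for four consecutive hours; the third hour spikes
tonight = [5, 6, 48, 4]

suspicious = flag_anomalous_hours(baseline, tonight)
print(suspicious)  # indices of hours worth a closer look
```

A production system would likely learn per-user and per-system baselines and combine several signals, but the core idea of comparing current behavior against learned normal behavior is the same.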

AI-driven biometrics and continuous authentication strengthen access controls considerably. Rather than just validating users at initial login, biometrics like fingerprints, facial recognition, or voice prints can verify identities repeatedly throughout a user session.

This helps thwart account takeover attacks even after access is granted, by ensuring the same authorized user remains in control.
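One way to picture continuous authentication is a session object that is re-checked periodically and locks itself after repeated low-confidence biometric matches. The sketch below assumes a hypothetical matcher that returns a score between 0 and 1; the class, thresholds, and scores are illustrative and not taken from any specific product.

```python
from dataclasses import dataclass

@dataclass
class Session:
    user_id: str
    min_score: float = 0.8   # assumed acceptance threshold for a biometric match
    max_failures: int = 2    # consecutive low scores tolerated before locking
    failures: int = 0
    locked: bool = False

    def recheck(self, match_score: float) -> bool:
        """Record one periodic biometric check; return True while the session stays open."""
        if self.locked:
            return False
        if match_score >= self.min_score:
            self.failures = 0          # a good match resets the failure streak
        else:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.locked = True     # likely a different person at the device
        return not self.locked

session = Session(user_id="clinician-17")
# Hypothetical scores from periodic checks during one session
results = [session.recheck(score) for score in [0.95, 0.91, 0.42, 0.38, 0.97]]
print(results)
```

Tolerating a single low score before locking is a design choice that trades a little security for fewer false lockouts caused by poor lighting or sensor noise.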

Securing the many IoT devices used throughout healthcare settings is also crucial. Patient wearables, remote telemetry, networked medical equipment, and more all handle sensitive data. Healthcare app developers can use AI to safeguard the connections, protocols, and data flows across this complex web of devices.

By monitoring traffic patterns, AI systems can quickly detect emerging anomalies, zero-day exploits, or other IoT-focused attacks. This allows compromised devices to be isolated before threats propagate.
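The isolation step can be sketched as a comparison of each device's current traffic against its own baseline. The example below uses a fixed ratio rule in place of a learned model, and the device names, traffic figures, and `max_ratio` value are hypothetical.

```python
def devices_to_isolate(baseline_kb_per_min, current_kb_per_min, max_ratio=5.0):
    """Return IDs of devices that are unknown or far above their own traffic baseline."""
    flagged = []
    for device_id, current in current_kb_per_min.items():
        expected = baseline_kb_per_min.get(device_id)
        # Unknown devices have no baseline, so treat them as suspicious by default.
        if expected is None or current > expected * max_ratio:
            flagged.append(device_id)
    return sorted(flagged)

# Hypothetical per-device baselines learned from normal operation
baseline = {"infusion-pump-3": 12.0, "ecg-monitor-7": 40.0, "wearable-221": 2.0}
# Hypothetical traffic observed in the current window
current = {
    "infusion-pump-3": 14.0,
    "ecg-monitor-7": 900.0,   # sudden surge, possibly data exfiltration
    "wearable-221": 2.5,
    "unknown-cam-1": 30.0,    # device never seen before
}

isolate = devices_to_isolate(baseline, current)
print(isolate)
```

In practice the flagged list would feed a network control, such as moving the device to a quarantine VLAN, rather than just being printed.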

Finally, combating ransomware is a huge priority given the rise of these attacks.

Here, AI/ML systems can be trained to recognize typical patterns and indicators that precede a ransomware infection. This allows the attack to be blocked early, before files are encrypted and systems are locked down.

Additionally, AI can help contain the spread of ransomware by identifying and isolating the initial malicious files, limiting damage. If an attack does succeed, AI can further assist recovery by assessing damage patterns.
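One commonly used indicator of encryption activity is a burst of file writes with very high byte entropy, since encrypted output looks close to random. The sketch below computes Shannon entropy and flags such a burst; the thresholds and sample payloads are assumptions for illustration, and a real detector would combine this with other signals because compressed files and images also score high.

```python
import math
from collections import Counter

def shannon_entropy(data: bytes) -> float:
    """Bits of entropy per byte: 0.0 for constant data, up to 8.0 for uniform bytes."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in Counter(data).values())

def looks_like_mass_encryption(recent_writes, entropy_threshold=7.5, min_files=3):
    """Flag a burst of writes when at least `min_files` payloads look encrypted."""
    high = sum(1 for payload in recent_writes if shannon_entropy(payload) > entropy_threshold)
    return high >= min_files

# Ordinary text has low entropy
plain_note = b"Patient visit note: follow-up in two weeks. " * 50
# Stand-in for encrypted output: every byte value equally frequent
random_like = bytes(range(256)) * 16

print(looks_like_mass_encryption([plain_note, plain_note, plain_note]))
print(looks_like_mass_encryption([random_like, random_like, random_like]))
```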

How AI/ML Improves Healthcare Data Security

AI and ML offer unique advantages for bolstering healthcare cybersecurity:

Models can rapidly analyze massive datasets to surface risks and abnormalities that human reviewers would miss. This enables the detection of advanced threats that evade traditional security.

Models can be retrained continuously to recognize new attack patterns and vulnerabilities soon after they emerge. This supports near-real-time, proactive threat detection.

Because models and defenses are updated as threats evolve, security can keep pace with a changing landscape.

By automating tasks like threat detection, analysis, and monitoring, ML frees up security staff for higher-value responsibilities that require human discernment and expertise.

Models become more accurate and robust as they train on more diverse and higher-quality data over time. Broader access to well-governed data therefore strengthens AI/ML security capabilities in healthcare.

Risks Around AI/ML and Patient Data Privacy

Despite the advantages, AI/ML in healthcare also introduces data privacy risks that must be recognized.

For example, an AI analytics tool could be used to identify high-cost patients in order to limit their care. While promoting efficient resource use, this type of application risks patient well-being without thoughtful oversight.

To minimize patient privacy risks from AI, healthcare organizations need responsible data governance. Regulatory mandates like HIPAA, which specifically governs healthcare data privacy in the United States, and GDPR, a broader data protection regulation in the European Union, should be built into AI projects from the start, with compliance continuously monitored.

Scrubbing datasets of identifiable patient information is crucial before model development. Ongoing audits and validation after deployment can catch issues like bias or unfair outcomes before they impact patients. Healthcare app developers should also create AI ethics oversight programs, with staff focused on assessing patient risks.
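A minimal sketch of the scrubbing step might drop direct identifiers and replace the patient ID with a keyed hash so records can still be linked without exposing the original ID. The field names, the identifier list, and the key below are hypothetical. This is pseudonymization, which on its own does not satisfy formal de-identification standards such as HIPAA's Safe Harbor method.

```python
import hashlib
import hmac

# Illustrative subset only; a real list of identifiers is much longer
DIRECT_IDENTIFIERS = {"name", "email", "phone", "address", "ssn"}

def scrub_record(record: dict, secret_key: bytes) -> dict:
    """Drop direct identifiers and replace patient_id with a keyed pseudonym."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    if "patient_id" in cleaned:
        digest = hmac.new(secret_key, str(cleaned["patient_id"]).encode(), hashlib.sha256)
        cleaned["patient_id"] = digest.hexdigest()[:16]
    return cleaned

# Hypothetical training record
record = {
    "patient_id": "P-1001",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "heart_rate": 72,
    "diagnosis_code": "E11.9",
}

scrubbed = scrub_record(record, secret_key=b"demo-key-not-for-production")
print(scrubbed)
```

Using a keyed hash rather than a plain hash means someone without the key cannot rebuild the mapping by hashing guessed IDs, which is why the key should be stored separately from the dataset.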

AI systems themselves should have auditing capabilities built in. With deliberate efforts by healthcare app developers to embed privacy protection and oversight into AI tools, patient trust can be maintained even as these advanced technologies are leveraged for better care and efficiency.
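One possible form of built-in auditing is a tamper-evident log of model decisions, where each entry stores a hash of the previous one so later edits become detectable. The sketch below is one such approach under assumed names; the event fields and model name are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in the chain

def append_entry(log: list, event: dict) -> None:
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = json.dumps(event, sort_keys=True)
    entry_hash = hashlib.sha256((prev_hash + body).encode()).hexdigest()
    log.append({"event": event, "prev_hash": prev_hash, "hash": entry_hash})

def verify(log: list) -> bool:
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + body).encode()).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True

audit_log = []
append_entry(audit_log, {"model": "access-anomaly-v1", "decision": "flagged", "item": "query-8841"})
append_entry(audit_log, {"model": "access-anomaly-v1", "decision": "allowed", "item": "query-8842"})

print(verify(audit_log))
```

Changing any stored event after the fact causes `verify` to fail, which gives auditors a simple integrity check over the decision history.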

The keys are good data hygiene, transparency, validation procedures, and ethical accountability across the AI model lifecycle.

AI and ML introduce powerful new techniques to enhance healthcare data protection. When applied thoughtfully, these technologies can significantly improve cybersecurity. However, it's also crucial to carefully manage risks around patient privacy.

On the benefits side, AI/ML enables healthcare organizations to keep pace with growing data volumes and ever-evolving threats. Machine learning systems can help prevent attacks and catch issues that evade traditional defenses. Automating security tasks also increases efficiency.

Yet AI/ML in healthcare also warrants caution around factors like bias, transparency, and misuse. While not inherent to the technologies themselves, these risks can emerge from poor implementation. Rigorous governance and continuous monitoring help avoid pitfalls and ensure AI/ML securely serves patient interests.

Finding the right balance is key.

Healthcare organizations should adopt AI/ML in healthcare cybersecurity gradually via small, controlled pilots. Partnering with experienced providers like Consagous Technologies, a leading healthcare app development company, brings proven expertise for managing AI/ML risks.

Our healthcare app developers can collaborate to incorporate AI/ML across digital health initiatives securely. This expertise allows organizations to unlock AI's promise in improving data protection, care quality, and patient experiences.

Contact us today to get started on responsibly leveraging AI/ML for healthcare app development.

A: The benefits of using AI in healthcare data protection include faster and more accurate detection of security threats, reduced risk of data breaches, improved compliance with privacy regulations, enhanced data access controls, and proactive monitoring of data security. This can ultimately lead to better protection of patients' sensitive information and overall trust in the healthcare system.

A: Machine learning algorithms can be applied to healthcare data protection by training models on large datasets to identify patterns and anomalies that may indicate potential security breaches or unauthorized access. These models can continuously learn and adapt to new threats, allowing for more effective detection and prevention of data breaches in real time.

A: AI can be integrated into existing healthcare organizations by implementing AI-driven security solutions and tools that continuously monitor and analyze data access, user behavior, and network activity. These solutions can work alongside existing security measures to provide an added layer of protection for patient data.